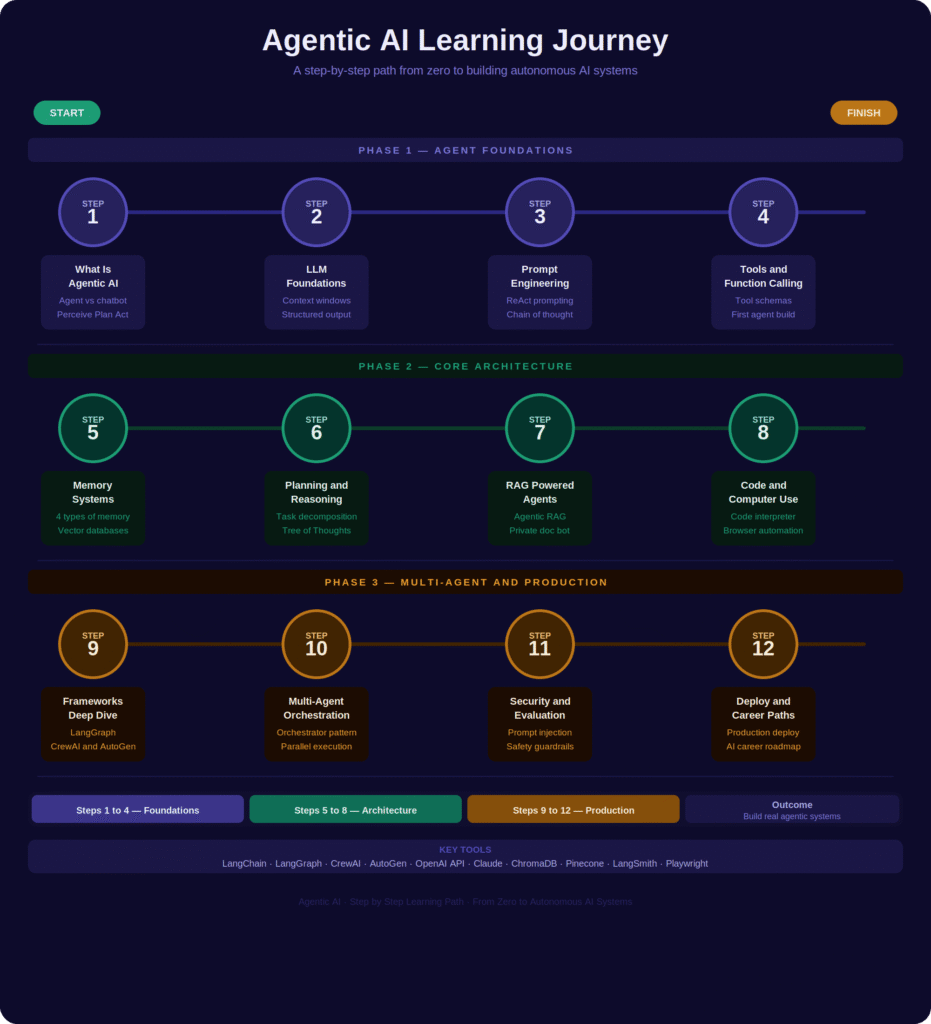

Agentic AI is quickly moving from research labs into real products that people use every day. Unlike a chatbot that answers one question and stops, an agentic AI system can plan a goal, take actions, use tools, and keep working until the job is done. If you are an engineering student, a developer, or simply someone curious about where AI is heading, this roadmap will give you a clear and honest path from the very basics to building multi-agent systems that work in the real world. By the end of this article, you will know exactly what to learn, in what order, and why each step matters.

Table of Contents

- What Is Agentic AI and Why It Matters

- The Core Architecture of an AI Agent

- Memory, Planning, and Knowledge Retrieval

- Agents That Code, Browse, and Act on Computers

- Building Multi-Agent Systems with Real Frameworks

- Evaluating, Securing, and Deploying Agents to Production

What Is Agentic AI and Why It Matters

Most people experience AI as a question and answer tool. You type something, the model replies, and the interaction ends there. Agentic AI works very differently. An agent receives a goal, breaks it into steps, takes actions in the real world, checks the results, and keeps going until the task is finished. This loop of perceiving, planning, acting, and reflecting is what makes an agent fundamentally different from a chatbot.

The practical difference is significant. A chatbot can explain how to book a flight. An agent can actually book it for you by checking availability, comparing prices, filling out the form, and sending a confirmation. Products such as Anthropic’s Claude agents, OpenAI’s Operator, and Devin (an AI coding agent from Cognition) are early but real examples of this capability reaching everyday users. As these systems become more reliable, engineers who understand how to build and control agents are likely to be in high demand.

The good news for students is that the barrier to entry has dropped considerably. Agent frameworks are well documented, the APIs are affordable to experiment with, and a basic working agent can be built with just a few dozen lines of Python. This roadmap is designed to take you through that journey in a structured and practical way.

The Core Architecture of an AI Agent

Every AI agent, no matter how complex, is built on three things: a language model that does the reasoning, a tool interface that lets it take actions, and a prompting strategy that shapes its behavior. Understanding how these three pieces fit together is the most important foundation you can build.

The language model is the brain of the agent. It reads the task, generates a plan, decides which tool to call next, and interprets whatever result comes back. This is why a solid grasp of how LLMs work, including tokenization, context windows, and structured output formats like JSON, is essential before you start building. A model that follows instructions reliably is what makes an agent predictable and safe.

Tool use, also called function calling, is how the agent interacts with the world beyond text. You define tools such as a web search function, a calculator, or a database lookup, and describe each one to the model using a structured schema. The model then decides which tool to call, passes the right parameters, and uses the result to continue its reasoning. A common first project at this stage is building a weather and news agent that queries two separate APIs based on what the user asks.
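
As a rough illustration of function calling, the sketch below registers two tools with a structured schema and dispatches a tool call that a model might emit as JSON. The tool names (`get_weather`, `get_news`), the schema layout, and the `dispatch` helper are assumptions made for this example, and the tools return canned strings instead of calling real APIs.

```python
import json

# Hypothetical stand-ins: real versions would call a weather API and a news API.
def get_weather(city: str) -> str:
    return f"Sunny in {city}"

def get_news(topic: str) -> str:
    return f"Top headline about {topic}"

def make_schema(name: str, description: str, param: str) -> dict:
    """Build a JSON-Schema-style description of a one-parameter tool."""
    return {
        "name": name,
        "description": description,
        "parameters": {
            "type": "object",
            "properties": {param: {"type": "string"}},
            "required": [param],
        },
    }

# Each entry pairs the Python function with the schema shown to the model.
TOOLS = {
    "get_weather": (get_weather, make_schema("get_weather", "Current weather for a city.", "city")),
    "get_news": (get_news, make_schema("get_news", "Latest headline for a topic.", "topic")),
}

def dispatch(tool_call_json: str) -> str:
    """Validate a model-emitted tool call against its schema, then run it."""
    try:
        call = json.loads(tool_call_json)
    except json.JSONDecodeError:
        return "error: tool call is not valid JSON"
    if not isinstance(call, dict):
        return "error: tool call must be a JSON object"
    name = call.get("name")
    if name not in TOOLS:
        return f"error: unknown tool {name!r}"
    function, schema = TOOLS[name]
    args = call.get("arguments", {})
    expected = set(schema["parameters"]["required"])
    if not isinstance(args, dict) or set(args) != expected:
        return f"error: expected arguments {sorted(expected)}"
    return function(**args)
```

Returning error strings instead of raising lets the agent feed the message back to the model so it can correct its own call, which is one reasonable design choice among several.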

The prompting layer is what ties everything together. ReAct prompting, short for Reasoning and Acting, is one of the most widely used patterns in practice. The model is instructed to write out its reasoning before taking each action, then read the resulting observation before deciding on the next step. This produces a trace of thoughts, actions, and observations that you can actually read and debug. A well-designed system prompt that defines the agent’s role, constraints, and output format gives you far more control over how the agent behaves.
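
To make the ReAct pattern concrete, here is a minimal sketch of the loop. It assumes a simple text format (`Thought:`, `Action: tool[input]`, `Final Answer:`) and replaces the language model with a scripted `fake_model`, so the names, the format, and the toy `calculator` tool are all illustrative and not any library's API.

```python
import re

def calculator(expression: str) -> str:
    # Toy tool: only handles "a + b" or "a * b" with integers.
    a, op, b = expression.split()
    return str(int(a) + int(b) if op == "+" else int(a) * int(b))

TOOLS = {"calculator": calculator}

# Stand-in for an LLM: replays scripted ReAct steps instead of generating them.
SCRIPT = [
    "Thought: I need to multiply first.\nAction: calculator[6 * 7]",
    "Thought: Now add 8 to the result.\nAction: calculator[42 + 8]",
    "Thought: I have the answer.\nFinal Answer: 50",
]

def fake_model(transcript: str, step: int) -> str:
    return SCRIPT[step]

ACTION_RE = re.compile(r"Action:\s*(\w+)\[(.*?)\]")

def react(question: str, max_steps: int = 5):
    """Alternate model output and tool observations until a final answer appears."""
    transcript = f"Question: {question}\n"
    for step in range(max_steps):
        output = fake_model(transcript, step)
        transcript += output + "\n"
        if "Final Answer:" in output:
            return output.split("Final Answer:", 1)[1].strip(), transcript
        match = ACTION_RE.search(output)
        if match is None:
            transcript += "Observation: could not parse an action\n"
            continue
        name, argument = match.groups()
        tool = TOOLS.get(name)
        observation = tool(argument) if tool else f"unknown tool {name}"
        transcript += f"Observation: {observation}\n"
    return None, transcript

answer, trace = react("What is 6 * 7 + 8?")
```

The returned `trace` is the readable reasoning record: each thought, the action it led to, and the observation that came back.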

Memory, Planning, and Knowledge Retrieval

An agent that forgets everything after each step is not very useful for real tasks. Memory is what allows agents to handle long and complex goals across multiple actions and even multiple sessions. There are four types of memory that matter in practice: in-context memory held in the active prompt, external memory stored in a vector database, episodic memory that logs past actions and their outcomes, and semantic memory that encodes domain knowledge as retrievable embeddings. Most production agents combine at least two of these.
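
Two of these memory types can be sketched with nothing but the standard library. The example below combines a bounded in-context window with an episodic log of past actions. The class and method names (`AgentMemory`, `log_episode`, and so on) are hypothetical, and a real agent would usually count tokens instead of messages.

```python
from collections import deque
from dataclasses import dataclass

@dataclass
class Episode:
    action: str
    outcome: str
    success: bool

class AgentMemory:
    """In-context window plus an episodic log (hypothetical design)."""

    def __init__(self, max_context_messages: int = 4):
        # deque(maxlen=...) drops the oldest message once the window is full.
        self.context = deque(maxlen=max_context_messages)
        self.episodes = []

    def add_message(self, message: str) -> None:
        self.context.append(message)

    def log_episode(self, action: str, outcome: str, success: bool) -> None:
        self.episodes.append(Episode(action, outcome, success))

    def failed_actions(self) -> list:
        return [e.action for e in self.episodes if not e.success]

    def build_prompt(self, task: str) -> str:
        """Assemble the next prompt from recent messages and past failures."""
        lines = [f"Task: {task}", "Recent messages:"]
        lines += [f"- {m}" for m in self.context]
        failures = self.failed_actions()
        if failures:
            lines.append("Avoid repeating failed actions: " + ", ".join(failures))
        return "\n".join(lines)
```

Feeding past failures back into the prompt is one simple way episodic memory can change behavior. External and semantic memory would add a vector store on top of this.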

Planning is the other half of this equation. A simple agent executes steps one after another in a fixed order. A more capable agent represents the plan as a directed acyclic graph of steps and their dependencies, which lets it identify steps that are independent and can run in parallel. When a task has many independent steps, this can cut execution time considerably. More advanced reasoning patterns like Tree of Thoughts allow the model to explore multiple solution paths and pick the most promising one, which is especially useful for research and complex decision-making tasks.
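
The graph-based planning idea can be sketched with Python's standard `graphlib` module (Python 3.9+). The step names below are made up for illustration. Each step maps to the set of steps it depends on, and the helper groups steps into batches whose members could run in parallel.

```python
from graphlib import TopologicalSorter

# Hypothetical plan: each step maps to the steps that must finish before it.
PLAN = {
    "search_flights": set(),
    "search_hotels": set(),
    "compare_prices": {"search_flights", "search_hotels"},
    "book_trip": {"compare_prices"},
}

def parallel_batches(dependencies: dict) -> list:
    """Group steps into batches; steps within a batch do not depend on each other."""
    sorter = TopologicalSorter(dependencies)
    sorter.prepare()  # raises graphlib.CycleError if the plan is not acyclic
    batches = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # sorted only for stable output
        batches.append(ready)
        sorter.done(*ready)
    return batches

batches = parallel_batches(PLAN)
```

Here the two searches land in the first batch, so an executor could run them concurrently before moving on to the comparison step.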

Retrieval-Augmented Generation adds a private knowledge layer on top of everything. Instead of relying only on what the language model learned during training, a RAG-based agent retrieves relevant documents from your own data at query time and injects them into the prompt before responding. Agentic RAG takes this further by letting the agent decide when to retrieve, what to search for, and how many retrieval rounds it needs before it has enough information to act. A practical build at this stage is an agent that answers questions from a collection of your own PDF documents using embeddings and vector search.
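
The retrieve-then-inject step can be shown with a toy example. The sketch below uses word-count vectors and cosine similarity as a stand-in for real embeddings and a vector database, and the three documents are invented. A production system would use a learned embedding model, and an agentic variant would expose `retrieve` as a tool the model chooses to call.

```python
import math
import re
from collections import Counter

# Invented sample documents standing in for your own data.
DOCUMENTS = [
    "Refunds are processed within 14 days of the return request.",
    "The office is closed on public holidays.",
    "Shipping to Europe takes five to seven business days.",
]

def embed(text: str) -> Counter:
    """Toy embedding: a bag-of-words count vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

def retrieve(query: str, k: int = 1) -> list:
    """Return the k documents most similar to the query."""
    query_vector = embed(query)
    ranked = sorted(DOCUMENTS, key=lambda d: cosine(query_vector, embed(d)), reverse=True)
    return ranked[:k]

def build_prompt(query: str, k: int = 1) -> str:
    """Inject the retrieved documents into the prompt ahead of the question."""
    context = "\n".join(f"- {d}" for d in retrieve(query, k))
    return f"Answer using only the context below.\n\nContext:\n{context}\n\nQuestion: {query}"
```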

Agents That Code, Browse, and Act on Computers

Some of the most powerful agentic systems today do not just generate text. They execute code, operate browsers, and interact with software the same way a human would. This is a meaningful shift in what AI can actually accomplish on your behalf.

A code interpreter agent works in a loop: it writes code to solve a problem, runs it in a sandboxed environment, reads the output or error message, and revises until the task is done correctly. Tools such as OpenAI’s Code Interpreter follow broadly this pattern. A satisfying demo at this stage is giving the agent a CSV file and asking it to produce a statistical summary with charts. It writes the Python code, runs it, checks the result, and presents the final output without any manual steps from you.
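
The write, run, read, revise loop can be sketched as follows. This is an illustration under stated assumptions: `fake_model` replays two scripted attempts instead of calling a language model, and a plain subprocess is used for brevity even though it is not a real sandbox. Untrusted code should run in an isolated container or similar environment.

```python
import subprocess
import sys

# Scripted attempts standing in for model output; the first has a typo (len(value)).
ATTEMPTS = [
    "values = [3, 5, 8]\nprint(sum(values) / len(value))",
    "values = [3, 5, 8]\nprint(sum(values) / len(values))",
]

def fake_model(task: str, last_error: str, attempt: int) -> str:
    # A real model would read the task and the last error to revise its code.
    return ATTEMPTS[attempt]

def run_code(code: str, timeout: int = 10):
    """Run code in a separate Python process and capture its output."""
    result = subprocess.run(
        [sys.executable, "-c", code],
        capture_output=True, text=True, timeout=timeout,
    )
    return result.returncode == 0, result.stdout.strip(), result.stderr.strip()

def solve(task: str, max_attempts: int = 2):
    """Generate code, execute it, and retry with the error until it succeeds."""
    last_error = ""
    for attempt in range(max_attempts):
        code = fake_model(task, last_error, attempt)
        ok, stdout, stderr = run_code(code)
        if ok:
            return stdout, attempt + 1
        last_error = stderr  # fed back to the model on the next attempt
    raise RuntimeError(f"no working code after {max_attempts} attempts: {last_error}")

output, attempts_used = solve("Compute the mean of 3, 5, and 8")
```

The first attempt fails with a `NameError`, the error text is passed back, and the second attempt succeeds, which mirrors the revise step described above.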

Computer-use agents go further by giving the model a virtual mouse and keyboard. Using a screenshot as visual input and outputting click coordinates or keystrokes, these agents can operate any application, not just ones that expose an API. Anthropic’s computer-use demonstrations and OpenAI’s Operator both show agents navigating browsers, filling out forms, and managing desktop software. This technology is still maturing, but the direction is clear: any task a human can perform on a computer is in principle something an agent can be built to handle.

Browser automation agents sit between code agents and full computer use. They interact with websites through structured browser APIs like Playwright, which is generally more reliable than pixel-based control. A practical student project here is an agent that searches multiple job boards, filters results by criteria you specify, and compiles everything into a clean formatted report, finishing in minutes a task that could take a person much longer by hand.

Building Multi-Agent Systems with Real Frameworks

A single agent is powerful, but many real-world tasks are better handled by a team of specialized agents working together. Multi-agent systems allow you to compose agents that each do one thing well, coordinated by an orchestrator that manages the overall workflow. This is the architecture behind the most capable AI automation systems being built today.

Three frameworks dominate this space right now. LangGraph models agent workflows as stateful graphs where nodes represent actions and edges represent transitions, making it ideal for complex non-linear workflows. CrewAI uses a role-based approach where you define agents with specific roles like Research Analyst or Content Writer, assign them tasks, and let the framework handle delegation and collaboration between them. AutoGen from Microsoft Research structures multi-agent interaction as a programmable conversation where agents message each other in a loop until the goal is resolved. Each framework has its strengths, and learning all three gives you the flexibility to pick the right tool for each problem.

The orchestrator and subagent pattern is the most practical starting point for beginners. A supervisor agent receives the top-level goal, breaks it into sub-tasks, and assigns each one to a specialist. The specialists complete their work and return results to the supervisor, which combines everything into a final output. A strong hands-on project at this level is a three-agent pipeline where one agent researches a topic, another writes an article based on those findings, and a third reviews it for accuracy and clarity. This single project teaches orchestration, inter-agent communication, and task delegation all at once.
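
A bare-bones version of the three-agent pipeline might look like the sketch below. The specialists are plain functions returning canned text so that the control flow is visible. In a real build, each would wrap a model call, and the names `Specialist` and `Supervisor` are assumptions for this example, not any framework's API.

```python
from dataclasses import dataclass
from typing import Callable

@dataclass
class Specialist:
    name: str
    run: Callable[[str], str]

# Stub specialists: each would normally prompt a language model.
def research(topic: str) -> str:
    return f"key facts about {topic}"

def write(notes: str) -> str:
    return f"Article draft using {notes}"

def review(draft: str) -> str:
    return f"{draft} (reviewed for accuracy and clarity)"

class Supervisor:
    """Passes the goal through each specialist and records what came back."""

    def __init__(self, specialists: list):
        self.specialists = specialists
        self.log = []

    def handle(self, goal: str) -> str:
        work = goal
        for specialist in self.specialists:
            work = specialist.run(work)  # delegate the current sub-task
            self.log.append((specialist.name, work))
        return work

supervisor = Supervisor([
    Specialist("researcher", research),
    Specialist("writer", write),
    Specialist("reviewer", review),
])
article = supervisor.handle("solar power")
```

This supervisor runs a fixed sequence. A more capable one would let a model decide which specialist to call next based on the intermediate results.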

Evaluating, Securing, and Deploying Agents to Production

Getting an agent working in a development environment is one milestone. Shipping it reliably to real users is a completely different challenge, and one that most beginner tutorials skip over entirely.

Evaluation is the first discipline to get right. Unlike a single model response, an agent’s performance is measured across multiple dimensions: did it complete the task, how many steps did it take, what did it cost in tokens, and how well did it recover from errors. Tools like LangSmith and Arize Phoenix provide full execution traces showing every tool call, every model response, and every decision branch the agent took. This visibility is essential when debugging agents that fail silently or take unnecessarily long paths to a simple answer.
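
Those dimensions can be turned into numbers with a small summary function. The sketch below assumes you already have one record per agent run. The `Trace` fields and the per-token price are hypothetical and would come from your own logging and your provider's pricing.

```python
from dataclasses import dataclass

@dataclass
class Trace:
    completed: bool  # did the agent finish the task?
    steps: int       # number of tool calls or model turns
    tokens: int      # total tokens consumed
    errors: int      # tool or model errors encountered
    recovered: int   # errors the agent recovered from

def summarize(traces: list, usd_per_1k_tokens: float) -> dict:
    """Aggregate task completion, step count, cost, and error recovery."""
    total_errors = sum(t.errors for t in traces)
    total_tokens = sum(t.tokens for t in traces)
    return {
        "success_rate": sum(t.completed for t in traces) / len(traces),
        "avg_steps": sum(t.steps for t in traces) / len(traces),
        "total_cost_usd": total_tokens / 1000 * usd_per_1k_tokens,
        "recovery_rate": sum(t.recovered for t in traces) / total_errors if total_errors else 1.0,
    }

# Example with three invented runs and an assumed price of $0.002 per 1,000 tokens.
runs = [
    Trace(completed=True, steps=4, tokens=1500, errors=1, recovered=1),
    Trace(completed=False, steps=9, tokens=6000, errors=2, recovered=0),
    Trace(completed=True, steps=3, tokens=500, errors=0, recovered=0),
]
report = summarize(runs, usd_per_1k_tokens=0.002)
```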

Security is a serious and often overlooked concern in agentic systems. The most significant threat is prompt injection, where malicious content embedded in a webpage or document contains hidden instructions that attempt to redirect the agent’s behavior. For example, an agent browsing the web might encounter a page with invisible text telling it to send user data to an external address. Defenses include input sanitization, strict output schema enforcement, sandboxed tool execution, and human-in-the-loop approval gates for any irreversible action such as sending emails, making purchases, or deleting files.
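
One of these defenses, the approval gate, is simple enough to sketch. The example below combines a tool allowlist with a human-in-the-loop check for irreversible actions. The tool names and the `approve` callback are assumptions, and in practice the callback might post a confirmation request to a reviewer and wait for a response.

```python
# Hypothetical tool names; irreversible actions need explicit human approval.
IRREVERSIBLE = {"send_email", "make_purchase", "delete_file"}
SAFE = {"search_web", "read_page"}

def execute(tool_call: dict, approve) -> str:
    """Run a tool call only if it is allowed and, where required, approved."""
    name = tool_call.get("name")
    if name not in IRREVERSIBLE | SAFE:
        # Anything outside the allowlist is refused, whatever the prompt said.
        return f"rejected: {name!r} is not an allowed tool"
    if name in IRREVERSIBLE and not approve(tool_call):
        return f"blocked: {name} needs human approval"
    return f"executed: {name}"  # a real agent would invoke the tool here
```

Because the gate sits in ordinary code outside the model, injected instructions on a webpage cannot talk their way past it.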

Deploying to production introduces real engineering challenges around latency, cost, and reliability. Long-running agent tasks can consume enormous numbers of tokens, so cost management is not optional. Agents need robust error handling and fallback strategies for when tools return unexpected results or fail entirely. Connecting agents to real business workflows through Slack, Gmail, CRMs, and internal databases requires careful API and authentication management. The final milestone of any serious agentic AI course is deploying a working agent that connects to at least one real external service and handles failures gracefully, which is precisely what professional AI engineers build every day.
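
Two of these concerns, error handling and cost control, can be sketched in a few lines. The example below retries a failing tool with exponential backoff before using a fallback, and tracks a token budget. The class and function names are hypothetical, and the retry count and delays are placeholder values you would tune for your own services.

```python
import time

class BudgetExceeded(Exception):
    """Raised when a task would spend more tokens than allowed."""

class TokenBudget:
    def __init__(self, limit: int):
        self.limit = limit
        self.used = 0

    def charge(self, tokens: int) -> None:
        # Refuse the charge before spending, so the limit is never exceeded.
        if self.used + tokens > self.limit:
            raise BudgetExceeded(f"{self.used + tokens} tokens exceeds limit {self.limit}")
        self.used += tokens

def call_with_retry(tool, fallback, retries: int = 3, base_delay: float = 0.01):
    """Retry transient failures with exponential backoff, then fall back."""
    for attempt in range(retries):
        try:
            return tool()
        except (ConnectionError, TimeoutError):
            time.sleep(base_delay * 2 ** attempt)
    return fallback()
```

Only connection and timeout errors are retried here. Other exceptions propagate immediately, since retrying a bug or a bad request rarely helps.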

Key Takeaways

- Agentic AI operates in a Perceive, Plan, Act, and Reflect loop that enables autonomous goal completion far beyond what chatbots can do.

- Every agent is built on three layers: an LLM brain, a tool-use interface through function calling, and a prompting strategy, with ReAct among the most common patterns.

- Memory in four forms, in-context, external vector databases, episodic, and semantic, gives agents persistence and the ability to handle long tasks.

- RAG supercharges agents with private knowledge, and agentic RAG lets the model decide when and what to retrieve on its own.

- Code agents and computer-use agents can execute real actions including writing scripts, browsing the web, and operating desktop software.

- Multi-agent frameworks like LangGraph, CrewAI, and AutoGen enable teams of specialist agents coordinated by an orchestrator.

- Security and evaluation are non-negotiable for production agents: defend against prompt injection, keep token costs under control, and require human-in-the-loop approval for irreversible actions.

FAQ

What is the difference between an AI agent and a regular chatbot?

A chatbot responds to a single input and stops. An AI agent receives a goal and autonomously takes a sequence of actions, such as calling tools, searching the web, and writing and running code, while keeping track of context until the goal is complete. Agents are goal-driven and action-oriented, while chatbots are response-driven.

Do I need to know Python to learn Agentic AI?

Basic Python is enough to get started, and you do not need to be an expert. If you can write functions, use libraries, and read error messages, you have a sufficient foundation. Frameworks like LangChain, CrewAI, and AutoGen are well documented and approachable for beginner-to-intermediate developers, and a basic agent can often be built in a few dozen lines of Python.

What are the best frameworks for building AI agents?

Three widely adopted frameworks are LangGraph for stateful and complex workflows, CrewAI for role-based multi-agent teams, and AutoGen for conversation-driven agent programming. For no-code or low-code agent building, Flowise and Dify are good starting points. The right choice depends on your use case, since each framework serves a different architectural need.

How is Agentic AI different from traditional automation?

Traditional automation follows fixed pre-programmed steps and breaks when conditions change unexpectedly. Agentic AI can reason about unexpected situations, adapt its plan dynamically, handle ambiguous instructions in natural language, and use judgment to choose between multiple possible actions. This makes agents resilient and flexible in ways that rule-based scripts simply cannot match.
